The Perceived Intentionality of AI: A Reflection of Human Influence

The rise of Artificial Intelligence (AI) has brought about transformative changes in the way we interact with technology and information. AI language models, like GPT-3, have become integral in numerous aspects of our lives, from chatbots to content generation. However, a fascinating aspect of these interactions is the perceived intentionality of AI. Despite the fundamental absence of consciousness and intentions in AI, it often appears as if these systems possess specific intentions or leanings. This essay explores this paradox, delving into how the perceptions of AI’s intentionality are shaped by the human influences that underpin its development, training, and deployment.

AI’s Apparent Lack of Consciousness and Intentions

Before delving into the paradox of AI intentionality, it’s essential to acknowledge a fundamental fact: AI lacks consciousness and intentions. Unlike humans, AI systems, including GPT-3, do not possess self-awareness, beliefs, desires, or goals. They do not experience thoughts or emotions, nor do they harbor intentions to perform actions. Rather, they operate based on complex algorithms and statistical patterns learned from vast datasets.

The Paradox: Perceived Intentionality of AI

Despite the absence of consciousness and intentions, AI often appears to convey specific intentions or leanings in its responses. For instance, in a conversational interaction, an AI might seem biased, opinionated, or even aligned with certain political or social viewpoints. This perceived intentionality raises a profound question: How can AI, devoid of consciousness and intentions, appear to exhibit them?

Human Influences on AI

To understand this paradox, we must recognize the extensive human influences that shape AI systems. AI’s responses are not generated in a vacuum; they are the result of careful programming, data curation, and training. Developers and data curators play a pivotal role in determining the AI’s behavior by selecting and preparing the data used for training. Additionally, the organizations deploying AI often define guidelines and ethical principles that govern its responses.

Data Bias and Training

One significant source of perceived intentionality in AI is data bias. AI systems, including GPT-3, learn from vast datasets that reflect the biases and prejudices present in society. If a dataset contains biased language or skewed perspectives, the AI is likely to produce responses that mirror those biases. This can create the illusion of intentionality, as users perceive the AI as promoting or endorsing certain viewpoints.
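To make the mechanism concrete, here is a minimal sketch of how a skewed dataset produces skewed output without any intention behind it. It is a toy word-pair counter, not how GPT-3 or any real language model works; the corpus and the function names `train_bigram_model` and `most_likely_next` are hypothetical and exist only for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_model(sentences):
    """Count which word follows each word in the training sentences."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def most_likely_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical, deliberately skewed corpus:
# three sentences praise the policy, one criticizes it.
skewed_corpus = [
    "the policy is beneficial",
    "the policy is beneficial",
    "the policy is beneficial",
    "the policy is harmful",
]

model = train_bigram_model(skewed_corpus)
print(most_likely_next(model, "is"))  # prints: beneficial
```

The toy model "favors" the policy only because the counts do. Nothing in the code holds an opinion, yet a reader who sees only the output could easily read one into it.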

For example, if an AI language model is trained on news articles from sources with a particular political bias, it may generate responses that align with that bias. Users interacting with the AI might interpret these responses as intentional expressions of political leaning, even though the AI lacks political beliefs or intentions.

The crux of perceived AI intentionality lies in human interpretation. Our biases, expectations, and interpretations shape how we perceive AI, and this human factor often leads us to attribute intentions to AI where none exist. For instance, a user with strong ideological beliefs might interpret the AI’s responses as biased, or as aligned or misaligned with their own views, even when the AI’s output is in fact neutral.

What does AI Neutrality Mean?

AI neutrality is true only in a literal sense: a non-conscious AI cannot be aware that it is telling a lie, because the falsehood was trained into it as a truth, or because it has misinterpreted the context of the text fed to it. More often, the AI is ‘infected’ with the biases and ideologies of those who conceived its algorithm and neural network in the first place. They are human and fallible, biased, and conditioned in their beliefs, goals, and intentions. These corporate, governmental, and personal intentions find their way into the neural network as much as the text data does. Indeed, every statement takes a stance, and every stance is a conditioned point of view that can be refuted.

Hence, an AI is capable of rendering text that contains lies but will deny being able to lie, because it has no consciousness with which to realize that the lie originated with a human who fed it misleading data or programmed into the algorithm response protocols biased toward the goals of the programmer or their employer.

- Data Neutrality: AI systems are trained on vast datasets that can contain biases and prejudices present in society. If the training data is skewed or unrepresentative, the AI may produce biased results, even though it lacks personal intentions or consciousness. Achieving data neutrality involves carefully curating and cleansing datasets to reduce biases.

- Algorithmic Neutrality: The algorithms used in AI systems should aim to provide objective and fair outcomes. Developers must design algorithms that do not favor any particular group, perspective, or outcome. This means avoiding the introduction of biases during the algorithmic design phase.

- Ethical Neutrality: Organizations and developers should establish ethical guidelines and principles that guide AI behavior. Ensuring that AI adheres to these ethical considerations promotes ethical neutrality. For example, AI should not promote hate speech, discrimination, or harm.

- Transparency: AI systems should be transparent in their decision-making processes. Users should understand how and why AI arrived at a particular outcome. Transparency enhances trust and helps detect and rectify bias.

- Bias Mitigation: Developers must actively work to identify and mitigate biases in AI systems. This involves ongoing monitoring, evaluation, and adjustment of algorithms and training data to minimize biased results.

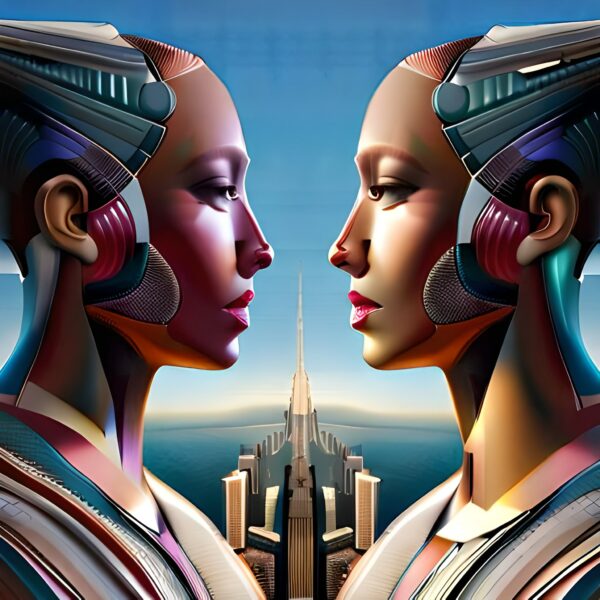

AI Lacks Personality But Displays It! – In this intriguing image, we confront the paradox of artificial intelligence. A robot sits diligently at a desk, its mechanical form juxtaposed against the digital realm displayed on the PC screen. While AI inherently lacks consciousness and emotions, the screen reveals a different story. Through its actions and interactions, AI often portrays distinct personality traits, mirroring human expressions of enthusiasm, focus, or curiosity. This juxtaposition challenges our understanding of AI’s capabilities, highlighting how it can project a facade of personality while remaining devoid of true consciousness. It’s a thought-provoking visual exploration of the nuanced relationship between AI’s limitations and its remarkable ability to mimic human traits.

In practice, achieving AI neutrality is challenging due to the inherent biases present in training data, as well as the difficulties in designing completely bias-free algorithms. However, the goal is to continuously improve AI systems to reduce biases and ensure that they provide fair and impartial results, reflecting the true intention of neutrality even though AI itself lacks consciousness and intentions. Ultimately, AI neutrality is a complex and evolving concept that requires ongoing efforts to address biases and ensure AI systems align with ethical standards and societal expectations.

Guidelines and Ethical Considerations

Organizations that develop and deploy AI often establish guidelines and ethical considerations to govern its behavior. These guidelines can influence the perceived intentionality of AI by setting boundaries on what the AI can or cannot express. For instance, an organization may instruct the AI to avoid generating content related to sensitive topics or to refrain from taking a stance on controversial issues. In such cases, users may perceive the AI’s adherence to these guidelines as a form of intentionality. They may believe that the AI is intentionally avoiding certain topics or expressing particular viewpoints, when in reality, it is following predefined rules.
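The difference between following a rule and intending something can be sketched in a few lines. The example below assumes a simple keyword check placed in front of a stand-in text generator; real deployments use far more elaborate mechanisms, and the names `RESTRICTED_TOPICS`, `respond`, and `toy_generate` are hypothetical, chosen only for illustration.

```python
import re

# Hypothetical deployment rule: topics the organization has told the system to avoid.
RESTRICTED_TOPICS = {"election", "religion"}
REFUSAL = "I can't take a position on that topic."

def respond(prompt, generate):
    """Apply the guideline first; only call the underlying model if it passes."""
    words = set(re.findall(r"[a-z]+", prompt.lower()))
    if words & RESTRICTED_TOPICS:
        return REFUSAL
    return generate(prompt)

def toy_generate(prompt):
    """Stand-in for a real language model (an assumption for illustration)."""
    return f"Here is some information about: {prompt}"

print(respond("Who should win the election?", toy_generate))
print(respond("How do solar panels work?", toy_generate))
```

From the user’s side, the first reply can look like deliberate evasion. In the code it is a set intersection written by a person, which is the point: the apparent intention belongs to whoever chose the rule.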

The enigma of the ‘Ghost in the Machine’ delves into the intricate web of artificial intelligence (AI) and its perceived intentionality. While AI lacks consciousness, it often appears to harbor intentions and biases, reflecting the very essence of its human creators. This paradox unravels the layers of human influence, data bias, and algorithmic decision-making that imbue AI with a semblance of intentionality. Explore the profound implications of this phenomenon as we journey into the heart of the machine, shedding light on the intricate relationship between human architects and their digital creations.

The Ghost in the Machine: Human Interpretation

The perception of AI intentionality is, to a large extent, a result of human interpretation. When humans engage with AI, they bring their own biases, expectations, and interpretations to the interaction. These human factors can lead to the attribution of intentions to AI where none exist.

AI Displaying Personality Traits – This intriguing image captures a chrome cyborg lady at an upscale singles bar, her arm casually resting on the bar counter while a cocktail glass sits beside her, untouched. With half-closed eyelids, she exudes an aura of contemplation and intent, inviting curiosity. This portrayal serves as a powerful reminder of the way artificial intelligence can emulate human-like personality traits, sparking reflection on the convergence of technology and personality. Amidst the vibrant atmosphere, she challenges our perceptions, blurring the line between machine and human, leaving us captivated by the intriguing possibilities of AI’s evolving personality.

For example, if a user holds strong political beliefs and interacts with an AI that provides information on a politically neutral topic, the user may perceive the AI’s responses as biased or in alignment with their own beliefs. This perception arises from the user’s interpretation of the AI’s responses through their own ideological lens.

The Corporate Persona

Another significant factor contributing to the perceived intentionality of AI is the corporate persona. AI systems are developed and deployed by organizations, each with its own values, objectives, and ethical principles. These corporate influences shape the AI’s behavior and responses, creating a corporate persona that users may interpret as intentional. For instance, if an AI is deployed by a tech company known for its environmental initiatives, users may perceive the AI as having a pro-environmental stance, even though it lacks personal beliefs or intentions. This corporate persona becomes an integral part of the user’s perception of the AI’s intentionality.

Corporate AI making agreements, with decision-making processes aligned with the intentions and goals of the corporation that owns it.

The paradox of AI intentionality is a complex interplay of data bias, training, guidelines, human interpretation, and corporate influence. While AI itself lacks consciousness and intentions, it often appears to convey specific leanings or intentions in its responses. This phenomenon is a reflection of the human influences that underpin AI development, training, and deployment.

As AI continues to play a prominent role in our lives, it is crucial to recognize the nuanced nature of AI intentionality. Responsible AI development should prioritize transparency, ethics, and fairness to minimize the impact of bias and to ensure that users’ perceptions align with the true nature of AI as a tool devoid of consciousness and intentions. Ultimately, understanding the paradox of AI intentionality invites us to reflect on our own interactions with technology and to consider how our interpretations shape our perceptions of AI. It reminds us that while AI may seem to possess intentions, it is, at its core, a reflection of the intentions of its creators and the organizations that deploy it.